A statement that has been circulating on Twitter recently:

“Thinking can be outsourced. Understanding cannot.”

An understanding of engineering fundamentals will remain valuable, even as LLMs grow more capable of generating plausible code and text.

Start with linear regression

Neural networks approximate functions.

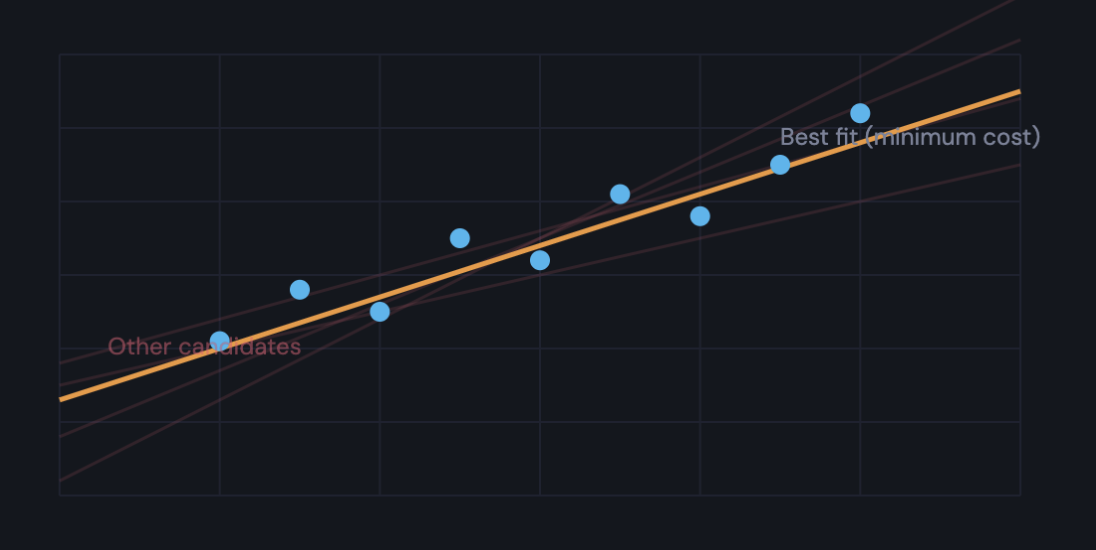

Linear regression is the simplest mathematical way to do the same thing. Picture a cloud of points and an infinite number of lines you could draw through it. Which of those lines fits best? The one that minimizes the total distance between its predictions and the actual points.

That distance has a name: the loss. Linear regression fits the line by minimizing it. Pick weights → measure the loss → adjust → repeat.
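To make the loop concrete, here is a minimal sketch in plain Python. The data points, the learning rate `lr`, the step count, and the choice of mean squared error as the loss are all my assumptions for illustration; nothing here is specific to any library.

```python
# Toy data scattered around the line y = 2x + 1 (values invented for this example).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.1]


def mse_loss(w, b):
    """Mean squared error between the line w*x + b and the data points."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def fit_line(steps=2000, lr=0.05):
    """Pick weights, measure the loss gradient, adjust, repeat."""
    w, b = 0.0, 0.0  # pick weights (an arbitrary starting guess)
    n = len(xs)
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Adjust: take a small step downhill on the loss surface.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


w, b = fit_line()
print(f"slope={w:.3f} intercept={b:.3f} loss={mse_loss(w, b):.4f}")
```

The fitted slope and intercept should land close to the 2 and 1 that the toy data was built around. For plain linear regression you could also solve for the best line directly with a formula; the loop is used here because it is the part that carries over to neural networks.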

Neural networks build on the same concept.

A neuron is just a line, bent

Linear regression can only fit straight lines. Real data isn’t straight.

A neuron takes w · x + b and runs the result through a curved function (a sigmoid). That single bend is the entire upgrade. Now you can fit S-curves. Stack two neurons and you can fit bumps. Stack thousands across many layers and you can approximate essentially any continuous curve to whatever accuracy you need.
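Here is a small sketch of that idea in plain Python. The function names (`neuron`, `bump`) and the specific weights are made up for illustration, and I am assuming the two neurons are combined by simply subtracting one output from the other.

```python
import math


def sigmoid(z):
    """The curved function: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(x, w, b):
    """A line (w*x + b), bent by a sigmoid."""
    return sigmoid(w * x + b)


def bump(x):
    """Two neurons combined: one S-curve rising near x = -1,
    minus another rising near x = +1, leaves a bump in between."""
    return neuron(x, 5.0, 5.0) - neuron(x, 5.0, -5.0)


for x in [-3.0, -1.0, 0.0, 1.0, 3.0]:
    print(f"x={x:+.1f}  neuron={neuron(x, 5.0, 5.0):.3f}  bump={bump(x):.3f}")
```

The bump is close to 1 around x = 0 and close to 0 far away on either side. Adding more neurons with different weights adds more bumps, which is the intuition behind fitting complicated shapes.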

The training algorithm is the same one used for linear regression: gradient descent. The only addition is backpropagation, which is the chain rule from calculus applied backwards to figure out how each weight contributes to the loss.
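For a single neuron, the chain rule can be written out by hand. The sketch below assumes a squared-error loss and one training example; the names `forward`, `backward`, and `numeric_grad_w` are mine. It checks the backpropagated gradient against a brute-force numerical estimate.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w, b, x, y):
    """Run the neuron forward and return the intermediate values and the loss."""
    z = w * x + b          # the line
    a = sigmoid(z)         # the bend
    loss = (a - y) ** 2    # squared error against the target y
    return z, a, loss


def backward(w, b, x, y):
    """Chain rule, applied from the loss back to the weights."""
    _, a, _ = forward(w, b, x, y)
    dloss_da = 2 * (a - y)        # how the loss changes with the output
    da_dz = a * (1 - a)           # derivative of the sigmoid
    dloss_dz = dloss_da * da_dz   # chain the two together
    grad_w = dloss_dz * x         # dz/dw = x
    grad_b = dloss_dz * 1.0       # dz/db = 1
    return grad_w, grad_b


def numeric_grad_w(w, b, x, y, eps=1e-6):
    """Brute-force check: nudge w slightly and see how the loss moves."""
    up = forward(w + eps, b, x, y)[2]
    down = forward(w - eps, b, x, y)[2]
    return (up - down) / (2 * eps)


grad_w, grad_b = backward(0.5, -0.2, 1.5, 1.0)
print(grad_w, numeric_grad_w(0.5, -0.2, 1.5, 1.0))
```

The two printed numbers should agree to several decimal places. In a deeper network the same multiplication of local derivatives is repeated layer by layer, which is all backpropagation is.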

Scale this up

Stack thousands of these neurons. Add an attention mechanism so each position in the input sequence dynamically picks which other positions from the previous layer to care about. Train on a large share of the text on the internet.
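One common way to implement that dynamic picking is scaled dot-product attention. The sketch below is a bare-bones, single-query version in plain Python with made-up two-dimensional vectors; real models learn the queries, keys, and values and run many of these in parallel, so treat this as an illustration of the idea rather than how GPT is actually built.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Score each key against the query, then blend the values by those scores."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, output


# Hypothetical inputs: the query points in the same direction as the first key.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
weights, output = attend([3.0, 0.0], keys, values)
print(weights, output)
```

Because the query lines up with the first key, most of the weight goes to the first value. Change the query and the weights shift, with no retraining involved; that is the "dynamic" part.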

You get GPT. I will dive deeper into that in my next post.

Full interactive guide with the live code and a training playground: